Acoustic QR Code

6 May 2016
Progress: Experiment

This is a silly implementation of a not-so-silly idea proposed by ixnaum on the halfbakery. A modem app would let you share a URL over a phone call in the same way that QR codes let you share them visually.

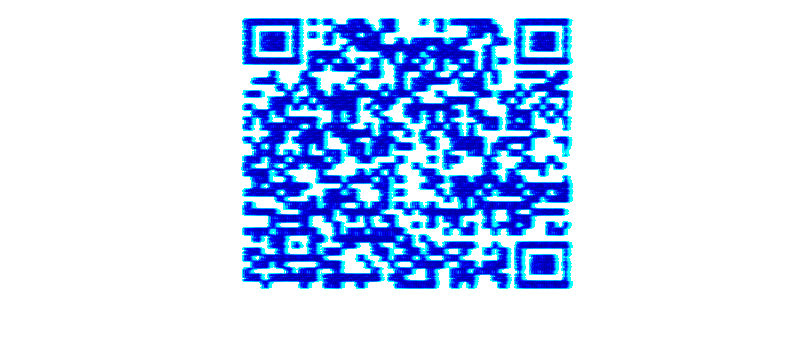

But it made me wonder if you could send an actual QR code over sound. We can generate the image using a QR code library, then scan through it and inverse Fourier transform it into a waveform. At the receiving end we Fourier transform it back and plot a spectrogram. The clever bit is that we can run the existing QR-scanning algorithms on that image, and they do all the alignment and error checking for us. Hopefully.
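A minimal sketch of that round trip for a single image row, assuming each row of the QR image becomes one buffer of audio and each pixel in the row drives one frequency bin, starting at a hypothetical `OFFSET` bin. The buffer size `N`, `OFFSET` and the helper names are illustrative, not taken from the demo, and a naive O(N²) DFT stands in for an FFT to keep it short.

```python
import cmath
import math
import random

N = 64       # samples per buffer (illustrative; real audio would use a larger power of two)
OFFSET = 8   # hypothetical index of the lowest frequency bin used by the code

def dft(samples):
    """Naive forward DFT (an FFT computes the same thing, faster)."""
    n = len(samples)
    return [sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive inverse DFT."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def encode_row(pixels, phases):
    """Turn one image row (1 = dark module, 0 = light) into one buffer of real samples."""
    if OFFSET + len(pixels) >= N // 2:
        raise ValueError("row must fit below the Nyquist bin")
    spectrum = [0j] * N
    for i, (p, phi) in enumerate(zip(pixels, phases)):
        k = OFFSET + i
        spectrum[k] = p * cmath.exp(1j * phi)
        spectrum[N - k] = spectrum[k].conjugate()  # mirrored bin keeps the waveform real
    return [s.real for s in idft(spectrum)]

def decode_row(samples, width):
    """Forward transform one buffer and read back the magnitude of each code bin."""
    spectrum = dft(samples)
    return [abs(spectrum[OFFSET + i]) for i in range(width)]

row = [1, 0, 1, 1, 0, 0, 1, 0, 1]   # one row of a QR code
rng = random.Random(0)
phases = [rng.uniform(0, 2 * math.pi) for _ in row]
audio = encode_row(row, phases)
print([round(m) for m in decode_row(audio, len(row))])  # [1, 0, 1, 1, 0, 0, 1, 0, 1]
```

The phases only affect how the audio sounds: the receiver discards them by taking magnitudes, which is why the image survives the trip.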

Here's the demo. It runs in your browser using the JavaScript QR Code reader by Lazar Laszlo and the QR code generator by davidshimjs. (You will need a browser that supports getUserMedia.)

» Sender

» Receiver

You can either send on one device and receive on another, or simply open two windows and hold your computer's microphone to its speakers. Alternatively, you can try receiving the data on a phone. With the right settings, it's possible to send data from a desktop computer to a phone at the other end of a room.

Type a message in the sender and hit 'Play' to hear it as a Fourier-transformed QR code.

Click 'Listen' on the receiver, and you should see a spectrogram of the audio it hears. When you have a clear QR code in view, hit 'Decode' to try to interpret it.

Tips for getting it to work

Once you've got this set up it can be quite reliable, but until then getting the transmitter and receiver to work together can be fiddly.

- Try to find a speaker / microphone arrangement that produces no faint vertical bands. It's very sensitive to orientation: moving just slightly can completely change the frequency response.

- Try moving the microphone further away and turning the volume up. With stereo speakers, only sending on one channel might improve transmission.

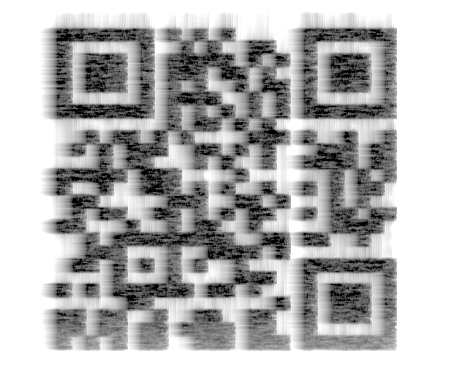

- Adjust the width and offset so that the message sits in a fairly flat (linear) region of the frequency response. Frequencies that are too high or too low may be out of the range of the speaker or microphone. You want the result to have a uniform intensity/blackness.

- If there are unavoidable vertical bands on the image, try to keep them away from the three large corner squares (the finder patterns).

- The receiver might run at a different speed to the sender, so make sure the height is correct to give a square image. Although the decoder does some perspective correction, it struggles with anything too rectangular.
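To see why the height matters, here is a rough model. It assumes the receiver turns every `fft_size` samples into one spectrogram row (no overlap) and that the code occupies a band of `band_hz` hertz. Buffers the receiver fails to process shrink the image vertically, so a slow receiver needs a longer transmission, which is what happened with the phone in the video. The function names and the `dropped_fraction` parameter are hypothetical, not settings from the demo.

```python
def code_aspect_ratio(duration_s, band_hz, sample_rate=44100, fft_size=1024,
                      dropped_fraction=0.0):
    """Height / width of the code as drawn by the receiver (1.0 means square)."""
    # Height: one row per buffer the receiver actually manages to process.
    rows = duration_s * sample_rate / fft_size * (1.0 - dropped_fraction)
    # Width: the code's band divided by the bin spacing, sample_rate / fft_size.
    cols = band_hz * fft_size / sample_rate
    return rows / cols

def square_duration(band_hz, sample_rate=44100, fft_size=1024, dropped_fraction=0.0):
    """Transmission time that makes the code come out square on this receiver."""
    cols = band_hz * fft_size / sample_rate
    return cols * fft_size / (sample_rate * (1.0 - dropped_fraction))

fast = square_duration(4000)                         # receiver keeps every buffer
slow = square_duration(4000, dropped_fraction=0.5)   # receiver drops half its buffers
print(round(slow / fast, 3))  # 2.0
```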

I might play around with the decoding some more to make it more reliable, but of course I have no delusions about this being a viable protocol. I just think it's reaaallly cool.

Video

In this quick demo, the phone was about 4 metres from the desktop speakers. My phone runs the receive program very slowly, so I had to transmit extra slowly (increase the height) for it to appear square.

More info

You can cheat / test this by sending the audio data directly to the receiver, either by connecting a cable between line in and line out, or through software (on Windows, by selecting 'Stereo Mix' as the microphone source). This gives almost perfect transmission.

With actual audio transmission, I found it was best to apply a small amount of blurring. This is done with a square (box) convolution: run through the image and set each pixel to the average of its surrounding pixels. I also made it auto-level the image. This is done by calculating a histogram, integrating it to find the range covered by the central 80% of the pixels, then stretching the image data so that this range spans the full range of intensities.

Blurring / leveling seems to work very well. One last thing I added was a test for obvious notch bands. This looks for columns of pixels that, after leveling, contain no black pixels, and darkens those columns until they do. This only applies to bands through the middle of the code: if there are notch bands at the sides, it can't tell what's noise and what's data, so it's important to adjust the width/offset to keep the edges of the code clear.
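A sketch of the notch-band check, assuming the leveled image is a list of rows of intensities in [0, 1] with 0 = black. The black threshold of 0.5, and darkening a column by shifting it down until its darkest pixel reaches 0, are assumptions about what "darkening until they do" means; `fix_notch_bands` is a hypothetical name.

```python
def fix_notch_bands(img, black=0.5):
    """Darken interior columns that contain no black pixel until their darkest pixel is 0."""
    h, w = len(img), len(img[0])
    col_min = [min(img[y][x] for y in range(h)) for x in range(w)]
    has_black = [m < black for m in col_min]
    out = [row[:] for row in img]
    if not any(has_black):
        return out   # nothing to anchor on, so leave the image alone
    first = has_black.index(True)
    last = w - 1 - has_black[::-1].index(True)
    # Only columns strictly between the outermost black columns count as "the middle";
    # blank columns at the edges can't be told apart from genuine white space.
    for x in range(first + 1, last):
        if not has_black[x]:
            for y in range(h):
                out[y][x] = img[y][x] - col_min[x]
    return out

print(fix_notch_bands([[0.9, 0.0, 0.8, 0.0, 0.9]]))  # [[0.9, 0.0, 0.0, 0.0, 0.9]]
```

Note that the edge columns (0.9) are left alone, matching the limitation described above.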

By the way, if you want to use other software to plot the spectrogram, make sure you set the scaling to linear, not logarithmic.

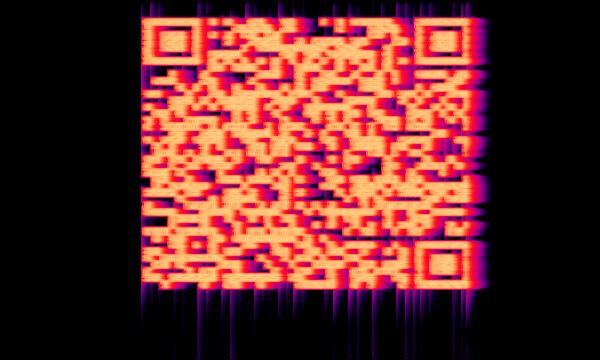

The spectrogram originally used the browser's built-in Fourier transform. This, I expect, runs much faster because it's compiled code. However, it's intended for visualizations, and I soon realized that its window function smeared things out way too much: every peak ended up with a long tail. The coloured spectrum at the top shows this effect. Implementing our own FFT with a rectangular window gives a much crisper output. I also switched from using the AnalyserNode to a ScriptProcessorNode, which hopefully won't drop entire buffers at random.
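The reason a rectangular window can be so crisp here is that the sender only emits bin-centred frequencies (a whole number of cycles per buffer). The sketch below assumes the receiver uses the same buffer length as the sender and that the tone doesn't change within a frame; it transforms one such tone with a rectangular window and with a Hann window. The Hann window is a stand-in for a smoother window; the Web Audio AnalyserNode itself uses a Blackman window, which spreads a bin-centred tone over even more bins.

```python
import cmath
import math

N = 64    # frame length (illustrative)
K0 = 10   # the tone sits exactly on this bin: K0 whole cycles per frame

def dft_magnitudes(samples):
    """Magnitudes of bins 0 .. N/2 - 1 of a naive DFT."""
    n = len(samples)
    return [abs(sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
            for k in range(n // 2)]

def relative_spectrum(window):
    """Spectrum of a windowed bin-centred tone, normalised to its peak bin."""
    tone = [math.cos(2 * math.pi * K0 * t / N) for t in range(N)]
    mags = dft_magnitudes([s * w for s, w in zip(tone, window)])
    return [m / mags[K0] for m in mags]

rectangular = [1.0] * N
hann = [0.5 - 0.5 * math.cos(2 * math.pi * t / N) for t in range(N)]   # periodic Hann

rect = relative_spectrum(rectangular)
smooth = relative_spectrum(hann)
print(round(rect[K0 - 1], 6), round(rect[K0 + 1], 6))       # 0.0 0.0
print(round(smooth[K0 - 1], 6), round(smooth[K0 + 1], 6))   # 0.5 0.5
```

With the rectangular window all the energy stays in bin K0; the Hann window puts half the peak's magnitude into each neighbour. Separately, AnalyserNode also averages each bin over time according to its `smoothingTimeConstant` (0.8 by default), which could add tails along the time axis too.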

But even the smeared-out image at the top can be made readable with some levels adjustment in Photoshop.

Phones

The biggest problem with running this on a phone is that, if your phone is a few years old like mine, it doesn't have the power to plot a real-time spectrogram without missing frames. Increasing the buffer size helps, but a selectable buffer size brings its own problems with the image size. One option is to record the data in one go and plot the spectrogram afterwards, although this would of course be more boring to watch while the sound is transferred.

Missing buffers mean the image is stretched or squeezed vertically in random places, and this is not the kind of distortion the QR reader is expecting. Possibly we could replace its algorithms with something more appropriate.
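A sketch of the record-first option, assuming the whole recording is already available as a list of samples (how it is captured is left out). Because nothing is processed in real time, no buffer can be skipped: every complete frame becomes exactly one spectrogram row, so the image can't be randomly stretched. `spectrogram` is a hypothetical helper using a rectangular window and a naive DFT; a real version would use an FFT.

```python
import cmath
import math

def spectrogram(recording, frame_size):
    """One row per complete, non-overlapping frame: magnitudes of bins 0 .. frame_size/2 - 1."""
    rows = []
    for start in range(0, len(recording) - frame_size + 1, frame_size):
        frame = recording[start:start + frame_size]
        rows.append([abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / frame_size)
                             for t in range(frame_size)))
                     for k in range(frame_size // 2)])
    return rows

def tone(k, n):
    """n samples of a cosine at bin k of an n-sample frame."""
    return [math.cos(2 * math.pi * k * t / n) for t in range(n)]

n = 32
recording = tone(3, n) + tone(7, n) + [0.0] * 10   # the trailing partial frame is ignored
rows = spectrogram(recording, n)
print(len(rows), [max(range(len(r)), key=r.__getitem__) for r in rows])  # 2 [3, 7]
```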

As for transmitting this data down a phone line, I suspect this isn't possible (although I haven't tried it). Phone lines are extremely squeezed in terms of bandwidth (vocoders, etc.), and anything that doesn't sound like a human voice gets destroyed. Damn those pesky phone companies trying to save bandwidth!

Data rate

Currently I can fairly consistently transmit a 55-byte message in the default time of 8.5 seconds, which is about 50 bits per second. So about a thousand times worse than a dial-up modem. But dial-up modems were refined over decades to get the best performance, whereas I wrote this in a single afternoon.

I may try to find the best data rate I can get using a direct audio cable between the devices; I expect I might be able to get into the kilobits.
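The arithmetic behind those figures, taking 56 kbps as the nominal dial-up rate:

```python
message_bytes = 55
duration_s = 8.5
dialup_bps = 56_000   # nominal rate of a 56k modem

bits_per_second = message_bytes * 8 / duration_s
print(round(bits_per_second, 1))             # 51.8
print(round(dialup_bps / bits_per_second))   # 1082
```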

Incidentally, I would recommend reading the wiki page on dial-up modems. They are incredible bits of engineering. Dial-up speeds might not sound like much, but remember that phone lines only have about 3 kHz of bandwidth, so pushing that to 56 kbps is insane.
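To put a number on "insane": inverting the Shannon-Hartley theorem, C = B log2(1 + S/N), gives the minimum signal-to-noise ratio an ideal 3 kHz channel would need to carry 56 kbps. This is an idealized bound, not how modems were actually designed; real 56k (V.90) modems only reached that speed downstream, helped by the digital link between the ISP and the telephone exchange.

```python
import math

def required_snr_db(bit_rate_bps, bandwidth_hz):
    """Smallest SNR in dB for which the Shannon-Hartley capacity reaches bit_rate_bps."""
    snr = 2 ** (bit_rate_bps / bandwidth_hz) - 1   # invert C = B * log2(1 + S/N)
    return 10 * math.log10(snr)

print(round(required_snr_db(56_000, 3_000), 1))   # 56.2
```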

Fourier transforms and reverse spectrograms

This is not my first foray into round-trip Fourier transforming. My biggest interest is in its applications for music synthesis, and I don't just mean the weird noises. But weird noises too.

While trying to glue the FFT to the QR code maker, I went through a few stages. First, I generated white noise and used the generated image to blank out various components. This actually works perfectly, except that the phase components, randomized afresh with every buffer fill, cause a discontinuity at each buffer boundary. It makes it quite clicky.
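A sketch of that first stage for a single buffer, assuming `mask` is one image row covering bins 1 to n/2 - 1 (1 = keep, 0 = blank) and that everything outside the code is blanked too. `masked_noise_buffer` is a hypothetical name, and the naive DFT stands in for an FFT. Each call draws fresh noise, so the phases of the surviving components change at every buffer boundary, which is where the clicks come from.

```python
import cmath
import math
import random

def dft(x):
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)) for k in range(n)]

def idft(X):
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n for t in range(n)]

def masked_noise_buffer(mask, n, rng):
    """White noise with every bin blanked except those where mask is 1.

    mask[i] controls bin i + 1, so it may cover bins 1 .. n/2 - 1."""
    if len(mask) > n // 2 - 1:
        raise ValueError("mask does not fit below the Nyquist bin")
    spectrum = dft([rng.gauss(0.0, 1.0) for _ in range(n)])
    for k in range(n // 2 + 1):
        if not (1 <= k <= len(mask) and mask[k - 1]):
            spectrum[k] = 0j
            spectrum[-k] = 0j   # mirrored bin n - k (bins 0 and n/2 are their own mirror)
    return [s.real for s in idft(spectrum)]

n = 32
mask = [1, 0, 0, 1, 1, 0, 1, 0, 0, 0, 1, 1, 0, 1, 0]   # one image row, bins 1 .. 15
buf = masked_noise_buffer(mask, n, random.Random(1))
spectrum = dft(buf)
print([k for k in range(1, n // 2) if abs(spectrum[k]) > 1e-6])  # [1, 4, 5, 7, 11, 12, 14]
```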

Much better to randomize the components once at the start, then propagate them while keeping their magnitudes constant. We then copy/attenuate these components into the FFT. 'Twas the thought anyway, and it works perfectly. There was, however, a face-palming moment in this development. Each of those components, by definition, completes a whole number of cycles per buffer. That is to say, after propagating for a full buffer they will all be back where they started. In other words: we don't need to propagate them at all. Moments like this make me feel like a buffoon, and I almost want to delete the old reverse spectrogram experiments page out of embarrassment.
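A quick check of that observation, assuming bin k means k whole cycles per N-sample buffer: synthesising two buffers' worth of a continuously running oscillator bank gives a second buffer identical to the first, so reusing the same random phases for every buffer is equivalent to propagating them. `synth` and the bin choices are illustrative.

```python
import math
import random

N = 64   # buffer length in samples (illustrative)

def synth(components, start, length):
    """Samples start .. start+length-1 of a bank of cosines at bin-centred frequencies.

    components maps bin k (k whole cycles per N samples) to (amplitude, phase)."""
    return [sum(a * math.cos(2 * math.pi * k * t / N + phi) for k, (a, phi) in components.items())
            for t in range(start, start + length)]

rng = random.Random(0)
# Phases are randomised once; amplitudes would come from the current image row.
components = {k: (1.0, rng.uniform(0, 2 * math.pi)) for k in (5, 9, 12)}

one_buffer = synth(components, 0, N)
continuous = synth(components, 0, 2 * N)   # the oscillators left running for two buffers
# After N samples each phase has advanced by 2*pi*k, a whole number of turns, so the
# second buffer repeats the first: reusing the initial phases equals propagating them.
print(max(abs(a - b) for a, b in zip(continuous[N:], one_buffer)) < 1e-9)  # True
```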

Oh well. We can make up for it now by providing a working, generic, reverse spectrogram image program:

» Reverse Spectrogram Mk II

Hooray! It works so well, I might post some more on this later.